Everyone's treating AI autonomy as a ladder. What if it's a dial?

There's an assumption buried in almost every AI product pitch right now: more autonomy is better. That the goal is to move from "AI assists you" to "AI handles it", and that human involvement is friction to be eliminated.

Stripe's annual letter this month crystallised that assumption perfectly. John Collison described five levels of "agentic commerce", a framework presenting AI autonomy as a ladder from basic automation to full anticipation. As he noted, most of the hype focuses on the later levels, but real activity is already happening at levels 1 and 2. It's a brilliant framework. And almost everyone will read it as a staircase to climb.

https://twitter.com/collision/status/2026332177118732364

The same month, Mercedes-Benz and BMW both abandoned Level 3 self-driving, going backwards to a system that keeps the human more involved, not less.

One industry is building the ladder. Another just discovered the middle rungs don't hold.

The question is whether autonomy is a ladder at all, or whether it's something you should be adjusting based on context.

The Stripe framework (and why it matters beyond shopping)

In their 2025 annual letter, Stripe co-founders Patrick and John Collison laid out five levels of what they're calling "agentic commerce." It describes how purchasing decisions progressively shift from human to AI:

- Level 1: Eliminating web forms. You decide what to buy. The AI handles checkout.

- Level 2: Descriptive search. You describe what you want in plain language instead of using keyword search.

- Level 3: Persistence. The system remembers your preferences across interactions.

- Level 4: Delegation. You set objectives and budget. The system handles everything else.

- Level 5: Anticipation. There is no prompt. The system acts proactively based on what it thinks you need.

Stripe built this for e-commerce, where it operates at scale: a $159 billion valuation and $1.9 trillion in payment volume, roughly 1.6% of global GDP. They launched an Agentic Commerce Protocol with OpenAI and a suite letting brands like Anthropologie, Etsy, and Coach sell through AI interfaces.

But strip away the commerce layer and what you're left with is a clean description of how agency transfers from human to machine in any domain. Research. Writing. Strategy. Operations. Hiring. Campaign management.

It's a genuinely useful framework. But it has a hidden assumption baked in: that Level 5 is the destination. That progress means climbing. That more autonomy is always the goal.

Every AI company pitches it this way. "We're at Level 2 now, but we're building towards Level 4." "Our roadmap takes you from co-pilot to autonomous agent." The direction is always the same: more autonomy, less human involvement, onwards and upwards.

The self-driving car industry believed the same thing for years. And they had to learn, at considerable cost, that it's wrong.

What the car industry learned the hard way

The Society of Automotive Engineers published a framework for autonomous driving that looks a lot like the Collison model. Six levels, from zero (no automation) to five (full automation). And for years, every car manufacturer treated it as a ladder.

The plan was to climb from Level 2 (cruise control and lane keeping, human actively supervising) through Level 3 (car drives itself in defined conditions, human ready to intervene) up to Levels 4 and 5 (full autonomy, no human needed).

Then something became clear. Level 3 was where the problems lived.

Not Level 1, which is too basic to cause harm. Not Level 5, which is genuinely capable. Level 3, the middle of the ladder, where the system is competent enough that you stop paying attention but not competent enough to manage without you.

Researchers had flagged this decades earlier. Parasuraman and Riley published a foundational paper in 1997 showing that the more reliable automation becomes, the more likely humans are to become complacent monitors. The system handles 95% of situations perfectly, so your brain checks out. And then the 5% arrives and nobody's at the wheel.

A 2023 study in Safety Science put it bluntly: the entire concept of a "fallback-ready user" is essentially a contradiction. You can't ask someone to monitor a system they've been told they don't need to operate. The brain doesn't work that way.

Level 3 didn't fail because the technology wasn't ready. It failed because the monitoring problem has no clean solution. The human is supposed to be "ready to intervene" while the system handles everything. But the more competent the system appears, the more supervision decays. That's not a design flaw you can fix. It's how human attention works.

Mercedes-Benz was the first manufacturer to ship a commercially available Level 3 system. They called it Drive Pilot. It was restricted to highway driving under 40 mph, essentially traffic jams only, and it required a $2,500 annual subscription on top of an already expensive car. That's how narrow the use case had to be to manage the risk.

Then, in January 2026, Mercedes removed Drive Pilot from the facelifted S-Class entirely. Their spokesperson was candid: they didn't want to offer a system with limited customer benefit when a better approach was coming within two to three years. In February, BMW followed, discontinuing its own Level 3 "Personal Pilot" system from the 7 Series facelift.

Both companies made the same move. They went backwards, from Level 3 to what they're calling Level 2++. More capable driver assistance that works in cities and on highways, but with the human actively engaged: hands on wheel, eyes on road.

And here's the detail that matters most: Mercedes' CEO described the Level 2++ experience as better. Ola Källenius tested it in San Francisco and said it felt like "the car is on rails." He drove for over an hour through heavy city traffic, onto the freeway, and back into the city. The system handled everything, with him actively engaged throughout.

Going back to Level 2 wasn't a retreat. It was a discovery: keeping the human engaged produced a better outcome than asking them to passively monitor.

Meanwhile, the companies that skipped Level 3 entirely went the other direction. Waymo built fully autonomous Level 4 systems, but only in extremely narrow, pre-mapped domains. Robotaxis in specific cities. Not full autonomy everywhere. Full autonomy in a tiny, well-defined box.

Nobody stayed at Level 3. The industry split in two: back to smarter L2 for open-ended driving, forward to L4 in tightly bounded domains. The middle of the ladder emptied out.

The AI industry is in the dead zone and hasn't noticed

Most AI systems are technically Level 2 in the driving sense. They propose, you approve. The copilot suggests code, you accept or reject. The chatbot drafts a paragraph, you edit or rewrite. The human is supposed to be actively engaged.

But behaviourally, that's not what's happening.

Anthropic published research recently showing that new users let Claude work unsupervised about 20% of the time. By 750 sessions, that figure rises to over 40%. The more people use AI, the less they check its work. Not because they've decided the AI is infallible. Because it's good enough, often enough, that their attention drifts.

That's Level 3 by behaviour, regardless of what the product was designed to be. And the more competent the system appears, the faster supervision decays.

I wrote recently about how AI's intelligence is upside down: brilliant at the hard stuff, unreliable at the easy stuff. It can synthesise 30,000 news articles into a coherent narrative but lose the thread of a three-message conversation. It can produce strategic analysis that would impress a CEO and then misspell their name.

That's the perfect recipe for the monitoring problem. The impressive outputs build your trust. The mundane failures happen in exactly the places you've stopped checking.

Now, the stakes are obviously different. Nobody dies because an AI hallucinated a source in a research report. But the failure pattern is actually harder to deal with, not easier.

When a self-driving car crashes, everyone knows immediately. It triggers investigations, press coverage, recalls. The feedback loop is fast and loud. That's why the automotive industry confronted Level 3's problems relatively quickly.

AI errors at Level 3 are the opposite. They're frequent, subtle, and often invisible. A hallucinated statistic in a strategy document gets passed up the chain. A slightly wrong financial assumption compounds across a model. A legal brief with a fabricated citation gets filed. A campaign message goes out with a factual error nobody caught because the tone and strategy were brilliant.

The cost of each individual error might be small. But they accumulate quietly, in exactly the places where humans have disengaged. And because nobody crashes, nobody investigates. The pattern embeds itself before anyone realises it's there.

The automotive industry learned about Level 3's problems the hard way. The AI industry might not even realise it's learning them.

The dial, not the ladder

Here's the reframe.

The five levels aren't a staircase you climb. They're a dial you set differently for every single task. And sometimes the right move is to turn the dial down, not up.

The car industry proved this. Mercedes went from Level 3 back to Level 2++ and the experience got better. Waymo went to Level 4 but only in tightly bounded domains. The lesson wasn't "build more autonomy." It was "match the level to the context."

The same logic applies to AI.

Your email drafts? Let the system run. Crank the dial up. The cost of error is a slightly awkward email that you can correct with a follow-up.

Your legal filings? Keep it at Level 1. The AI handles the formatting and mechanical work. A human makes every substantive decision.

Your content calendar? Let the AI handle the first draft and scheduling, but a human reviews before anything goes live. That's not a compromise. That's the dial set correctly for the risk.

Your financial models? Level 2 at most. The AI analyses and recommends. A human checks the numbers and executes.

The right level isn't determined by what the AI is capable of. It's determined by two things: how much it matters if the AI gets it wrong, and how easy it is to undo the damage.

Jeff Bezos has talked about this in a different context. He sorts decisions into one-way doors and two-way doors. Most decisions are two-way doors, reversible, so delegate them freely. A few are one-way doors, irreversible, so think carefully. The same logic applies to AI autonomy.

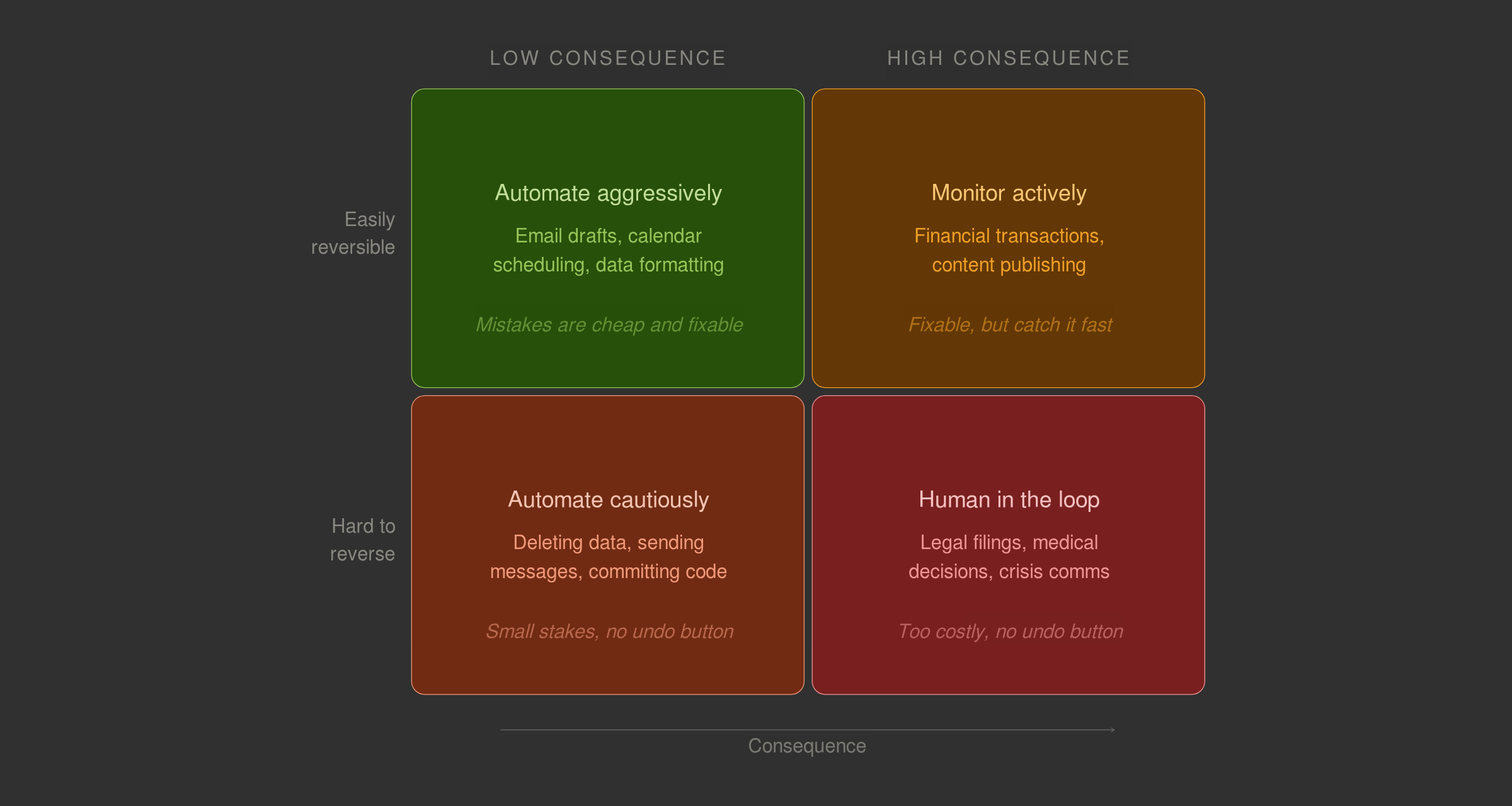

Put those two dimensions together and you get a simple matrix:

- Low consequence, easily reversible (email drafts, calendar scheduling, data formatting): automate aggressively. Mistakes are cheap and fixable.

- High consequence, easily reversible (financial transactions, content publishing): automate with active monitoring. You can fix it, but you want to catch it fast.

- Low consequence, hard to reverse (deleting data, sending messages, committing code): automate cautiously. Each individual mistake is small, but you can't take it back.

- High consequence, hard to reverse (legal filings, medical decisions, crisis communications): keep the human in the loop. The cost of error is too high and the undo button doesn't exist.

Commerce, where Stripe's framework was designed to live, naturally suits higher autonomy because most purchases can be reversed. The problems start when people take the same ladder and apply it to domains where the undo button doesn't exist.

That's four quadrants, not five levels. And a product should operate in all four simultaneously.
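For anyone who thinks better in code, the matrix can be sketched as a tiny lookup. This is a minimal illustration, not a real product design: the names `Task`, `Policy`, and `autonomy_policy` are hypothetical, and the yes/no labels on each task are judgment calls lifted from the quadrants above rather than anything measured.

```python
from dataclasses import dataclass
from enum import Enum


class Policy(Enum):
    AUTOMATE_AGGRESSIVELY = "automate aggressively"
    AUTOMATE_WITH_MONITORING = "automate with active monitoring"
    AUTOMATE_CAUTIOUSLY = "automate cautiously"
    HUMAN_IN_THE_LOOP = "keep the human in the loop"


@dataclass(frozen=True)
class Task:
    name: str
    high_consequence: bool  # how much it matters if the AI gets it wrong
    hard_to_reverse: bool   # how difficult it is to undo the damage


def autonomy_policy(task: Task) -> Policy:
    """Map a task to one quadrant of the consequence/reversibility matrix."""
    if task.high_consequence and task.hard_to_reverse:
        return Policy.HUMAN_IN_THE_LOOP
    if task.high_consequence:
        return Policy.AUTOMATE_WITH_MONITORING
    if task.hard_to_reverse:
        return Policy.AUTOMATE_CAUTIOUSLY
    return Policy.AUTOMATE_AGGRESSIVELY


# One example per quadrant. The labels are assumptions for illustration.
TASKS = [
    Task("email draft", high_consequence=False, hard_to_reverse=False),
    Task("content publishing", high_consequence=True, hard_to_reverse=False),
    Task("deleting data", high_consequence=False, hard_to_reverse=True),
    Task("legal filing", high_consequence=True, hard_to_reverse=True),
]

if __name__ == "__main__":
    for task in TASKS:
        print(f"{task.name}: {autonomy_policy(task).value}")
```

Note what the function never asks: how capable the model is. The setting depends only on the task, which is why the same product ends up in all four quadrants at once.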

Human in the loop is a feature, not a bug

The deeper insight here is one that cuts against the grain of almost everything the AI industry is saying right now.

The assumption is that human involvement is friction. That every level of autonomy you climb removes a burden. That the destination is a world where humans set the objectives and AI handles everything else.

But the car industry discovered something that should give the AI industry pause. For many tasks, Level 2 isn't just safer than Level 3. It's better. Working actively with a capable system, staying engaged and exercising judgment, often produces a better outcome than passively monitoring one.

This matches everything I've seen using AI tools daily over the past two years. The best work doesn't come from letting the AI run and scanning the output. It comes from working with it. Pushing back when it's wrong. Giving it context it's missing. Recognising when the output is brilliant and when it's generic nonsense. That's what I've previously called taste: the human judgment that makes AI output trustworthy.

That judgment isn't friction to be eliminated. It's the thing that makes the output good.

At Topham Guerin we think about this constantly. The question is never "how much can we automate?" It's "where does this specific task sit on the matrix?" Research synthesis for a blog post has a very different risk profile to a client-facing strategy document. A draft social media calendar has a very different risk profile to a crisis communications response. And for the high-stakes work, keeping humans actively engaged isn't a compromise. It's the whole point.

The competitive advantage isn't capability. It's judgment.

The Collison brothers built a genuinely brilliant framework. Five clean levels. A clear progression. A useful way to think about where your product sits and where it's heading.

But the smartest thing you can do with that framework is resist the temptation to read it as a one-way journey from 1 to 5.

The automotive industry spent billions learning that more autonomy isn't always better. Mercedes and BMW shipped Level 3, tried it in the real world, and went back to Level 2. Waymo went to Level 4 but only inside tightly bounded domains. Nobody stayed in the middle. The dead zone emptied out.

The AI industry has the chance to learn that lesson without paying the same price. Whether it will is another question. Right now the race is to remove humans from the loop as fast as possible. But the evidence from the one industry that's been through this before suggests the opposite: for most tasks, the human in the loop is what makes the system work.

The winners won't be the companies with the smartest model. They'll be the ones that know when not to use it.
